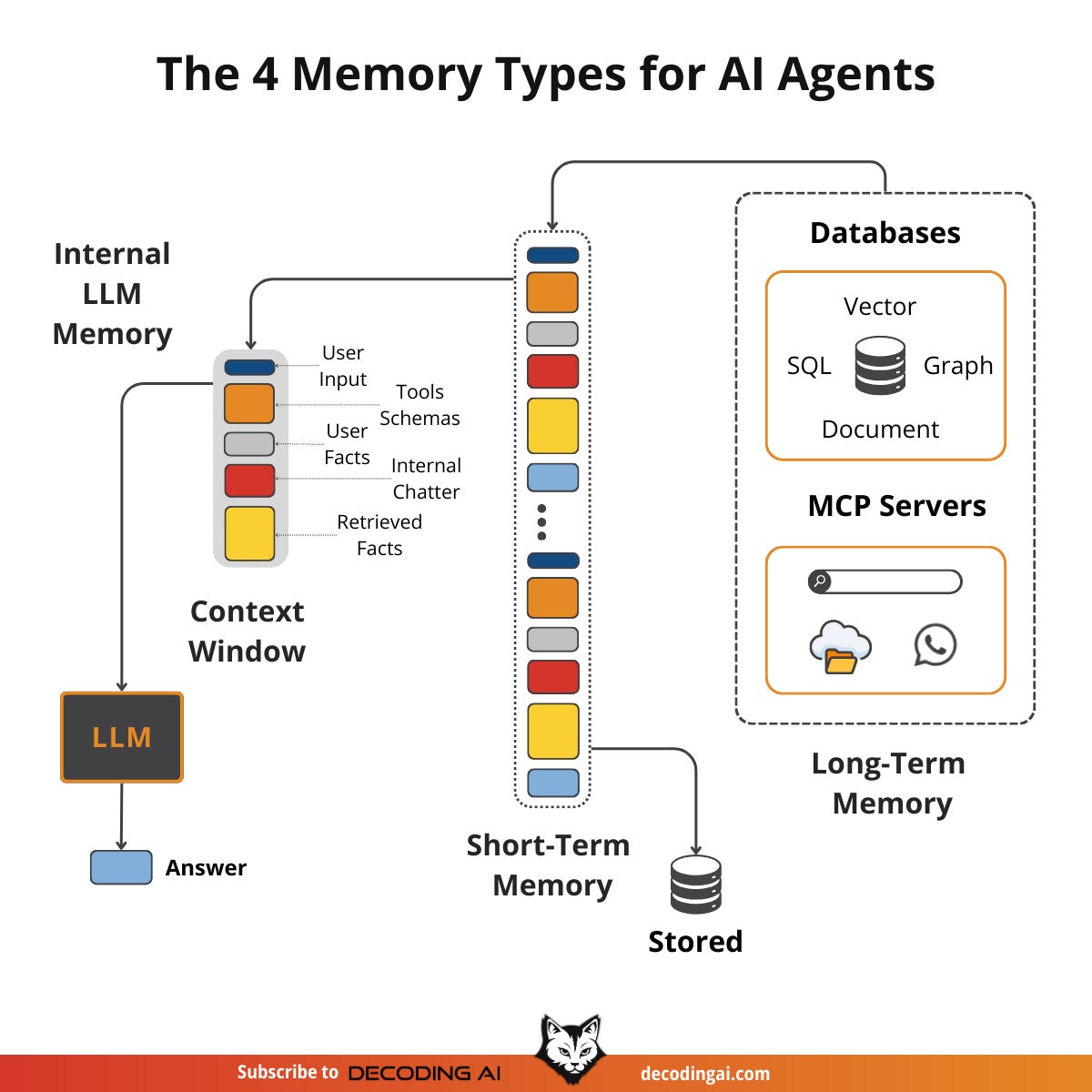

Memory Layer

Key Innovators and Platforms

- Zep: Builds temporal knowledge graphs for session memory and integrates with LangChain/LangGraph; reported to deliver an 18.5% accuracy gain and a 90% latency reduction in production pipelines. [ussb0b]

- Other notables:
  - LangMem (summarization for context limits)
  - Memary (knowledge graph-centric)
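
To make the "temporal knowledge graph" idea above more concrete, here is a minimal sketch in Python. It is not Zep's or Memary's API; the `Fact` and `TemporalGraph` names, the validity-interval fields, and the "one open value per subject and relation" rule are all assumptions chosen for illustration. The point is only that each fact carries a time range, so a newer statement supersedes an older one without erasing it.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Fact:
    """One graph edge (subject, relation, object) with a validity interval."""
    subject: str
    relation: str
    obj: str
    valid_from: datetime
    valid_to: Optional[datetime] = None  # None means "still considered true"


class TemporalGraph:
    """Hypothetical in-memory store for illustration; not any vendor's API."""

    def __init__(self) -> None:
        self.facts: List[Fact] = []

    def assert_fact(self, subject: str, relation: str, obj: str, at: datetime) -> None:
        # Assumption: a subject has one current value per relation, so a new
        # assertion closes the interval of the fact it supersedes.
        for fact in self.facts:
            if (fact.subject == subject and fact.relation == relation
                    and fact.valid_to is None):
                fact.valid_to = at
        self.facts.append(Fact(subject, relation, obj, at))

    def query(self, subject: str, relation: str, as_of: datetime) -> Optional[str]:
        # Return the object whose interval [valid_from, valid_to) contains as_of.
        for fact in self.facts:
            if (fact.subject == subject and fact.relation == relation
                    and fact.valid_from <= as_of
                    and (fact.valid_to is None or as_of < fact.valid_to)):
                return fact.obj
        return None
```

For example, after asserting that a user lives in Berlin from January 2024 and in Lisbon from June 2025, a query dated mid-2024 returns Berlin and a query dated mid-2025 returns Lisbon. A production system would add entity extraction, indexing, and persistence, none of which are shown here.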

| Platform | Core Architecture | Key Strength | Ideal Use Case |
| --- | --- | --- | --- |
| Mem0 | Vector + Graph + KV | Adaptive, personalized recall | Long-term agent personalization |
| Zep | Temporal Knowledge Graph | Low-latency scaling | Production LLM apps |
| Letta | Self-editing external store | Stateful local agents | Developer-deployed persistent bots |
| LangChain Memory | Buffer/summary/vector modules | Flexible integration | Multi-agent workflows |
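
The buffer and summary approaches listed above (and the summarization-for-context-limits idea attributed to LangMem) can be illustrated with a small sketch. This is not LangChain's or LangMem's API: `RollingSummaryMemory` and `naive_summarizer` are hypothetical names, and the summarizer here simply clips evicted text where a real system would presumably call a language model. The sketch keeps the most recent turns verbatim and folds older ones into a running summary so the prompt stays bounded.

```python
from collections import deque
from typing import Callable, Deque, Tuple

Turn = Tuple[str, str]  # (role, text)


def naive_summarizer(previous_summary: str, evicted: Turn) -> str:
    """Placeholder for an LLM call: appends a clipped copy of the evicted turn."""
    role, text = evicted
    clipped = text if len(text) <= 40 else text[:37] + "..."
    line = f"{role}: {clipped}"
    return f"{previous_summary}\n{line}" if previous_summary else line


class RollingSummaryMemory:
    """Hypothetical buffer-plus-summary memory; not a real library class."""

    def __init__(self, max_turns: int,
                 summarize: Callable[[str, Turn], str] = naive_summarizer) -> None:
        if max_turns < 1:
            raise ValueError("max_turns must be at least 1")
        self.max_turns = max_turns
        self.summarize = summarize
        self.summary = ""
        self.buffer: Deque[Turn] = deque()

    def add(self, role: str, text: str) -> None:
        self.buffer.append((role, text))
        # Fold the oldest turns into the summary once the buffer overflows.
        while len(self.buffer) > self.max_turns:
            self.summary = self.summarize(self.summary, self.buffer.popleft())

    def context(self) -> str:
        # Build the text that would be prepended to the next prompt.
        recent = "\n".join(f"{role}: {text}" for role, text in self.buffer)
        if self.summary:
            return (f"Summary of earlier turns:\n{self.summary}\n\n"
                    f"Recent turns:\n{recent}")
        return recent
```

With `max_turns=2`, adding a third turn moves the first one out of the verbatim buffer and into `summary`, while `context()` still returns the two latest turns in full. Swapping `summarize` for a model-backed function changes the quality of the summary without changing the eviction logic.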

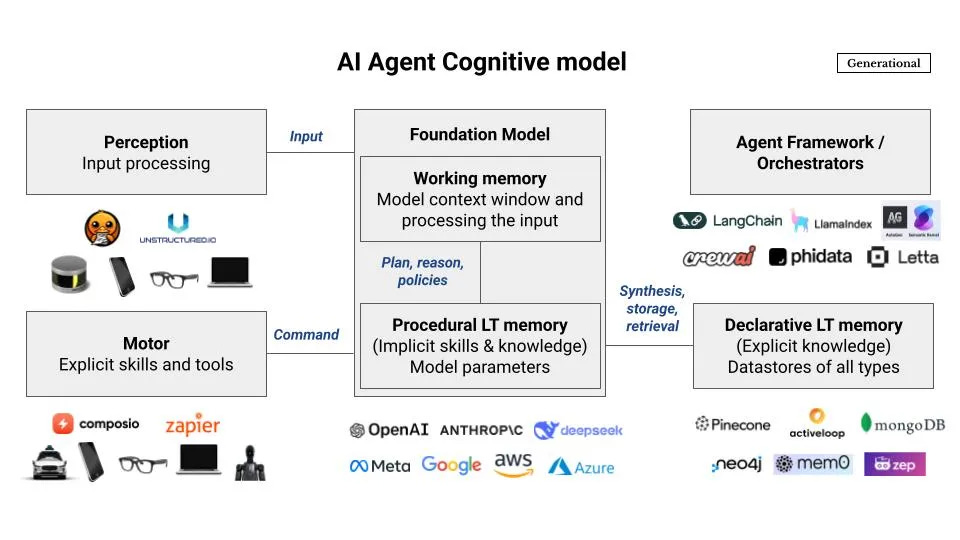

Why Agents Need a Memory Layer

Citations

[ussb0b] 2026, May 01. AI Memory Layer: Top Platforms and Approaches - Arize AI. Published: 2025-10-15 | Updated: 2026-05-02

[x20p8s] 2026, Apr 26. Mem0: Building Production-Ready AI Agents with Scalable Long .... Published: 2025-04-28 | Updated: 2026-04-27

[agacs4] 2026, Apr 25. Mem0 - The Memory Layer for your AI Apps. Published: 2026-04-21 | Updated: 2026-04-26

[y8kgfk] 2026, Mar 30. What Is AI Agent Memory? | IBM. Published: 2025-03-18 | Updated: 2026-03-31

[vedwh1] 2026, May 01. Memory in AI Agents - by Kenn So - Generational. Published: 2025-02-21 | Updated: 2026-05-02

[ro18hm] 2026, May 01. A Unified Memory Core for Enterprise AI Systems - Oracle Blogs. Published: 2026-03-23 | Updated: 2026-05-02

[4xxm5z] 2026, May 01. Agents that remember: introducing Agent Memory. Published: 2026-04-17 | Updated: 2026-05-02

[j1n39w] 2026, Mar 21. GitHub - mem0ai/mem0: Universal memory layer for AI Agents. Updated: 2026-03-22